What is the Largest Contentful Paint (LCP)?

The LCP is one of the three Core Web Vitals metrics. All three evaluate the user experience (UX), not the actual loading time of the website. The two are often mistakenly equated.

The other Core Web Vitals are the Cumulative Layout Shift (CLS) and the First Input Delay (FID), which was replaced by Interaction to Next Paint (INP) in March 2024. INP is displayed in Google PageSpeed Insights and the Google Search Console. The Core Web Vitals and the Google PageSpeed Score are Google ranking factors, and the Core Web Vitals have a relatively strong influence on the Google PageSpeed Score. Even a fast website can have poor Core Web Vitals and/or PageSpeed values.

In addition to the three Core Web Vitals, there are other Web Vitals: Total Blocking Time (TBT), Time to First Byte (TTFB), First Contentful Paint (FCP) and Time to Interactive (TTI). TTFB and FCP help diagnose LCP problems because both occur before the LCP: a poor TTFB or FCP therefore also worsens the LCP.

What is measured with the LCP?

The Largest Contentful Paint measures how long it takes until the largest content element in the visible area of the page (the viewport) is rendered. This can be an image, a video or a block of text.

What is a good LCP?

The LCP is measured in seconds and rated as follows:

- 0 – 2.5 seconds: fast

- 2.5 – 4 seconds: mediocre

- over 4 seconds: slow

For at least 75% of users, the LCP should be 2.5 seconds or less.
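The rating bands and the 75% rule above can be sketched in a few lines. This is only an illustration: the function names and the sample values are hypothetical, and the nearest-rank percentile method is an assumption (other tools may interpolate between samples).

```typescript
// Rating bands as listed above (values in seconds).
type LcpRating = "fast" | "mediocre" | "slow";

function rateLcp(seconds: number): LcpRating {
  if (seconds <= 2.5) return "fast";
  if (seconds <= 4) return "mediocre";
  return "slow";
}

// 75th percentile using the nearest-rank method (an assumption for this
// sketch). Throws if no samples are given.
function percentile75(samples: number[]): number {
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil(0.75 * sorted.length); // 1-based rank
  const value = sorted[rank - 1];
  if (value === undefined) throw new Error("no samples");
  return value;
}

// Hypothetical LCP samples (seconds) from eight page views.
const samples = [1.2, 1.8, 2.1, 2.4, 2.4, 2.6, 3.9, 5.0];
const p75 = percentile75(samples);
console.log(p75, rateLcp(p75));
```

In this made-up data set, five of eight page views are fast, yet the 75th percentile falls into the mediocre band, which is why a page can feel fast to most users and still miss the target.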

Tools: How can you measure the Largest Contentful Paint?

Laboratory data (Lighthouse)

Individual URLs can be measured and optimized under lab conditions with the Google PageSpeed Insights tool. Its report can be filtered down to the individual causes, from which optimization measures can then be derived. The mobile values are the important ones here: they are usually worse than the desktop values and therefore somewhat more difficult to optimize.

Browser extensions (for example: https://chrome.google.com/webstore/detail/web-vitals/ahfhijdlegdabablpippeagghigmibma) show the Core Web Vitals directly when a page is opened, which makes optimization potential easy to spot. However, such tools only help to identify the need for optimization, not to carry out the optimization itself.

If a large number of URLs of a website are to be checked, it is advisable to carry out a bulk check via the Google PageSpeed Insights API.
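A bulk check could start from a sketch like the following. It builds one request URL per page for the public PageSpeed Insights API (v5) and reads the lab LCP from a response. The helper names are hypothetical, and the response interface covers only the small part of the response used here.

```typescript
// Public PageSpeed Insights API v5 endpoint.
const PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed";

type Strategy = "mobile" | "desktop";

// Builds one request URL per page. The API key is optional in this sketch;
// for larger bulk runs a key is usually needed to avoid rate limits.
function buildPsiRequests(pages: string[], strategy: Strategy, apiKey?: string): string[] {
  return pages.map((page) => {
    const params = [`url=${encodeURIComponent(page)}`, `strategy=${strategy}`];
    if (apiKey) params.push(`key=${encodeURIComponent(apiKey)}`);
    return `${PSI_ENDPOINT}?${params.join("&")}`;
  });
}

// Minimal slice of the API response used in this sketch.
interface PsiLabResponse {
  lighthouseResult?: {
    audits?: Record<string, { numericValue?: number }>;
  };
}

// Returns the lab LCP in seconds, or undefined if the audit is missing.
function labLcpSeconds(res: PsiLabResponse): number | undefined {
  const ms = res.lighthouseResult?.audits?.["largest-contentful-paint"]?.numericValue;
  return ms === undefined ? undefined : ms / 1000;
}

// Hypothetical pages to check.
console.log(buildPsiRequests(["https://example.com/", "https://example.com/shop"], "mobile"));
```

The generated URLs can be requested with any HTTP client, and each parsed JSON response can then be passed to `labLcpSeconds`.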

Field data/user data (CrUX)

In addition to the lab data, field data (data from real users over the last 28 days) is also available in Google PageSpeed Insights and in the Google Search Console for websites with sufficient traffic. The advantage of the overview in the Google Search Console is that it points out problematic URLs with optimization potential without each one having to be measured individually in PageSpeed Insights.

The field data is collected via the Chrome User Experience Report (CrUX) from users of the Google Chrome browser who have opted in to sharing usage statistics.

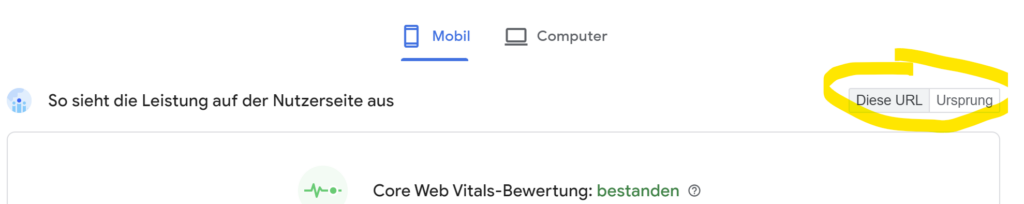

In the PageSpeed analysis, there are two relatively inconspicuous buttons below the device selection. If neither of the two buttons is grayed out, switching between them shows different CrUX values for all Core Web Vitals.

The CrUX data displayed in the PageSpeed Insights report distinguishes between two data sets for the Core Web Vitals:

- Mobile and desktop data for the URL to be measured (“This URL”)

- Mobile and desktop data for the entire website (“origin”)

If there is not enough data for an individual URL, the aggregated data for the entire website is displayed automatically, provided it is available. Whether data exists depends on the number of visitors to a website. Because the values depend on device type and URL, they can vary greatly. Always check whether an analysis shows data for the specific URL: if the aggregated data for the entire website is displayed instead, it is easy to draw incorrect conclusions.
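When the field data is read from the PageSpeed Insights API instead of the web interface, the same check can be automated. The sketch below assumes the v5 response fields `loadingExperience`, `originLoadingExperience` and `origin_fallback`; the function and type names are hypothetical, and the field names should be verified against the current API reference before relying on them.

```typescript
// Minimal slice of the field data in a PageSpeed Insights API response.
interface FieldMetric {
  percentile?: number; // 75th percentile in milliseconds
}

interface LoadingExperience {
  metrics?: Record<string, FieldMetric>;
  origin_fallback?: boolean; // true if origin data was returned for a URL request
}

interface PsiFieldResponse {
  loadingExperience?: LoadingExperience;
  originLoadingExperience?: LoadingExperience;
}

type FieldLcp =
  | { scope: "url" | "origin"; seconds: number }
  | { scope: "none" };

const LCP_KEY = "LARGEST_CONTENTFUL_PAINT_MS";

// Reports the field LCP together with the data set it comes from, so that
// origin-level values are not mistaken for URL-level values.
function fieldLcp(res: PsiFieldResponse): FieldLcp {
  const page = res.loadingExperience;
  const pageMs = page?.metrics?.[LCP_KEY]?.percentile;
  if (pageMs !== undefined) {
    const scope = page?.origin_fallback ? "origin" : "url";
    return { scope, seconds: pageMs / 1000 };
  }
  const originMs = res.originLoadingExperience?.metrics?.[LCP_KEY]?.percentile;
  if (originMs !== undefined) return { scope: "origin", seconds: originMs / 1000 };
  return { scope: "none" };
}
```

Returning the scope alongside the value makes it harder to compare a URL-level lab measurement against an origin-level field value by accident.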

Ultimately, the field data (real user data) displayed in the Google Search Console is decisive. Once an optimization measure has been implemented, have the fix validated in the Google Search Console so that Google confirms it was successful. Because this again depends on the experience of real users, the validation takes up to 28 days. If the site does not have enough users during this period, it may not be possible to validate an optimization measure.

To determine the LCP of real users more precisely, it is also advisable to use Real User Monitoring (RUM) tools.

Measures for LCP optimization

Before optimizing, check that the field data and the lab data show similar values and that the field data applies to the individual URL rather than to the aggregated website data (This URL / Origin). This way you can be sure that LCP optimization is really necessary for the respective page. The LCP filter in the PageSpeed analysis displays only the improvement opportunities that specifically affect the LCP.

It is advisable to analyze and improve the entire loading process. To achieve a significant improvement in the Largest Contentful Paint, it is usually not enough to simply optimize the individual LCP resource.

The LCP time can be divided into the following four phases:

TTFB (Time To First Byte)

The TTFB is the time between the start of loading the page and the receipt of the first byte. The HTML document should be delivered as early as possible.

- Use website caching and check that it works: some pages may be delivered dynamically without a cached version. Automatically and regularly warming the cache for all pages can help here.

- The server hardware can also be a cause: is the server generally too slow or frequently overloaded? Then a change of hosting plan or server may be advisable.

- The HTML document can also have a negative impact on the LCP if it is bloated, uncompressed or heavily nested. We recommend refactoring the code to make it leaner and to reduce the size of the DOM. Delivery can also be improved by using compression methods such as GZIP, Deflate or Brotli.

- A content delivery network can generally help to make the website and all its resources quickly available internationally, especially if users access the website from far away from the hosting server.

- To optimize the TTFB, the page should load without internal redirects. Redirects sometimes also occur for external scripts, for example. Every redirect adds unnecessary latency.

Load Delay (loading delay)

The time between the TTFB and the start of the loading process of the LCP resource (usually an image).

- The browser must be able to discover the URL of the LCP resource directly in the HTML source code. If the resource is loaded dynamically via JavaScript, or if it is a background image whose URL is only defined in a CSS file and not preloaded in the HTML, the LCP suffers: the CSS file or script must first be loaded before the browser can discover the URL of the LCP resource.

- The LCP resource should start loading at the same time as the very first resource requested after the HTML document. The loading sequence can be seen very clearly in a waterfall diagram. If the LCP resource starts late, it should be preloaded with a high priority.

- To save connection time, the LCP resource should also be located on the same server as the HTML document if possible.
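For a server-rendered page, the preload hint described above could be generated by a small helper like this. The function names and the image path are hypothetical; the markup itself uses the standard `rel="preload"`, `as="image"` and `fetchpriority="high"` attributes.

```typescript
// Escapes characters that could break out of a double-quoted HTML attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;");
}

// Returns a <link> tag for the document <head>. It makes the LCP image URL
// discoverable in the HTML itself and asks the browser to fetch it with
// high priority.
function lcpPreloadTag(imageUrl: string): string {
  return `<link rel="preload" as="image" href="${escapeAttr(imageUrl)}" fetchpriority="high">`;
}

// Hypothetical LCP image path.
console.log(lcpPreloadTag("/images/hero.avif"));
```

Placing the tag early in the `<head>` matters most when the image URL would otherwise only appear in a CSS file or be inserted by JavaScript.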

Load Time

The time it actually takes to load the LCP resource. This loading time should be reduced as much as possible without loss of quality.

- To optimize the LCP element itself, compress it as much as possible and deliver it in a modern format.

- Small images can also be embedded as data URIs, which eliminates the request entirely.

- Provide all images in modern formats such as WebP and AVIF, including a fallback to the original format for old browsers that do not yet support them.

- Generate several image sizes so that images do not have to be scaled in the code.

- Provide a separate header image version for mobile devices. A large hero image that loads the same image URL on mobile as on desktop is one of the main causes of a poor LCP value.

- Use lazy loading for images outside the immediately visible area (also check background images), but never for the LCP image.

- If the LCP resource is a GIF file, check whether a compressed video format would be the better choice.

- A CDN (Content Delivery Network) is also recommended so that the resource reaches the respective user as quickly as possible. A CDN has the disadvantage that a connection to another server must be established. This disadvantage can be reduced with a “preconnect” resource hint.

- Only the LCP resource should receive the high loading priority (attribute fetchpriority="high"). You should also check whether the number of resources to be loaded can generally be reduced or whether unimportant files can be loaded later.

- Well-configured browser caching also helps a lot, as it can completely eliminate the loading time when the file is requested a second time.

- If the LCP element is text rendered in a web font, the CSS property “font-display: swap” should be used for that font. This ensures that the text remains visible while the font is loading. Critical fonts can also be preloaded.
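Several of the image recommendations above (modern formats with a fallback, multiple sizes, no lazy loading, high priority) can be combined in one `<picture>` element. The following sketch generates such markup. The `HeroImage` shape and the file naming scheme `<basePath>-<width>.<ext>` are assumptions made for this illustration.

```typescript
// Hypothetical description of a hero image. The file naming scheme
// "<basePath>-<width>.<ext>" is an assumption made for this sketch.
interface HeroImage {
  basePath: string;    // path without width and extension, e.g. "/img/hero"
  fallbackExt: string; // original format for old browsers, e.g. "jpg"
  widths: number[];    // generated widths in pixels, must not be empty
  alt: string;         // assumed to be plain text without quotes or markup
}

// Builds a srcset value listing every generated width for one format.
function srcset(img: HeroImage, ext: string): string {
  return img.widths.map((w) => `${img.basePath}-${w}.${ext} ${w}w`).join(", ");
}

function heroPicture(img: HeroImage): string {
  const largest = Math.max(...img.widths);
  return [
    "<picture>",
    `  <source type="image/avif" srcset="${srcset(img, "avif")}" sizes="100vw">`,
    `  <source type="image/webp" srcset="${srcset(img, "webp")}" sizes="100vw">`,
    // The lazy-loading attribute is deliberately left out: the LCP image
    // must load eagerly and with high priority.
    `  <img src="${img.basePath}-${largest}.${img.fallbackExt}"` +
      ` srcset="${srcset(img, img.fallbackExt)}" sizes="100vw"` +
      ` alt="${img.alt}" fetchpriority="high">`,
    "</picture>",
  ].join("\n");
}

console.log(heroPicture({ basePath: "/img/hero", fallbackExt: "jpg", widths: [480, 1200], alt: "Hero" }));
```

The browser picks the first `<source>` whose format it supports and the smallest candidate that fits the viewport, so mobile devices no longer download the desktop-sized file.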

Render Delay (rendering delay)

The time between the end of the loading process and the rendering of the LCP element.

The LCP resource should be rendered immediately after loading. If this is not the case, rendering is blocked. This blockage can be caused by the following factors:

- CSS stylesheets or JavaScript files that are not loaded asynchronously block rendering

- The loading process is complete, but the element is not yet in the HTML DOM (it is only added later by JavaScript)

- Hiding the element using a script or a stylesheet

- The execution of complex scripts blocks the rendering of the element

The problems mentioned above can be solved as follows:

- Inlining stylesheets that block rendering, or reducing the size of the CSS file. Stylesheets should only be inlined if their file size is smaller than that of the LCP resource. Otherwise, unused CSS should be removed from the file, the CSS should be minified and compressed, and non-critical CSS should be moved to a separate file.

- Asynchronous loading of JavaScript files in the HTML head with the “defer” or “async” attribute in the script tag, or inlining small scripts in the HTML head

- Use of server-side rendering so that the LCP element is already contained in the HTML delivered by the server instead of being inserted by the client (the user’s browser), and no further script requests are necessary to display the content of the page.

- Splitting of large JS files to reduce the execution time and thus improve the LCP. JavaScript and CSS code can also be split for different page types/pages in order to load only what is really needed for the respective page.
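To decide which of the four phases described in this section deserves attention first, the LCP time can be broken down from a handful of timestamps. The sketch below assumes these timestamps have already been read from a waterfall diagram or a RUM tool. The field names and the sample values are hypothetical.

```typescript
// All timestamps are milliseconds since the start of navigation.
// The field names are hypothetical and chosen for this sketch.
interface LcpTimings {
  ttfb: number;          // first byte of the HTML document received
  resourceStart: number; // request for the LCP resource starts
  resourceEnd: number;   // LCP resource fully loaded
  lcp: number;           // LCP element rendered
}

interface LcpPhases {
  ttfb: number;
  loadDelay: number;
  loadTime: number;
  renderDelay: number;
}

// Splits the LCP time into the four phases. Negative differences, which
// can occur with inconsistent input, are clamped to zero.
function lcpPhases(t: LcpTimings): LcpPhases {
  return {
    ttfb: t.ttfb,
    loadDelay: Math.max(0, t.resourceStart - t.ttfb),
    loadTime: Math.max(0, t.resourceEnd - t.resourceStart),
    renderDelay: Math.max(0, t.lcp - t.resourceEnd),
  };
}

// Returns the name of the phase that takes the longest.
function slowestPhase(p: LcpPhases): keyof LcpPhases {
  const names: (keyof LcpPhases)[] = ["ttfb", "loadDelay", "loadTime", "renderDelay"];
  return names.reduce((worst, name) => (p[name] > p[worst] ? name : worst));
}

// Hypothetical measurement: the image is discovered late.
const phases = lcpPhases({ ttfb: 400, resourceStart: 1500, resourceEnd: 2100, lcp: 2300 });
console.log(phases, slowestPhase(phases));
```

In this made-up measurement the load delay dominates, so making the LCP resource discoverable earlier would likely help more than compressing the image further.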